
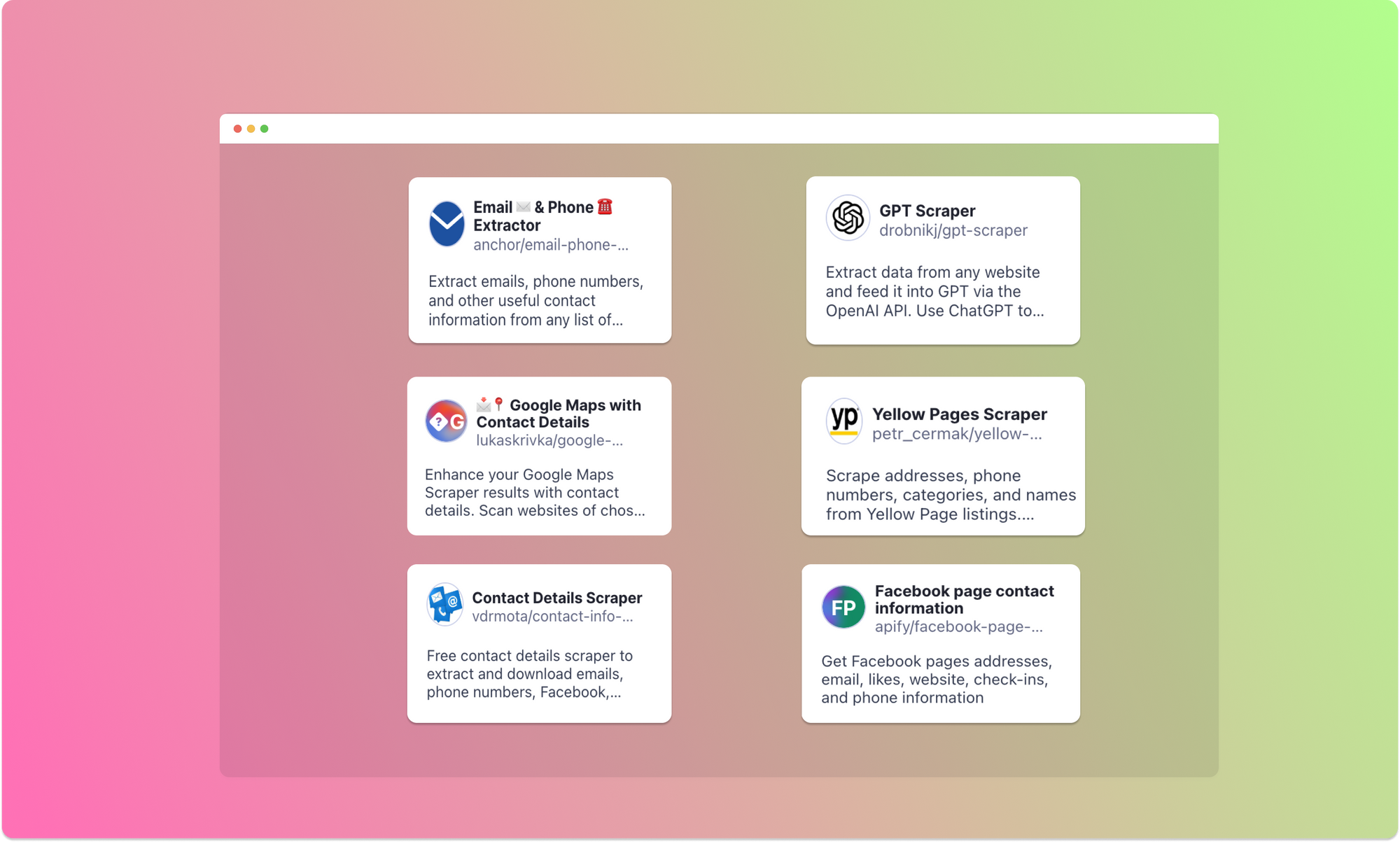

Guide To Choosing The Right Web Scraping Company

Apify has excelled as a service partly because of its first-rate professionals, who stay with you throughout the project. From the initial evaluation of your needs to final data delivery, you will be served by some of the world's most capable experts in web scraping and automation. It's not just mechanical data extraction that you get, however; ScrapeHero has implemented AI-based quality checks to detect data quality problems and fix them. Without compromising quality, ScrapeHero handles complex JavaScript/AJAX sites, CAPTCHAs, and IP blacklisting transparently. With this service, you can simply relax, since it takes care of everything.

Data Quality And Accuracy

The library supports XPath and CSS selectors, which make it easier to target specific elements in HTML. The speed at which your web scraping service can collect and process data significantly affects the efficiency of your data-driven decision making. The quality of your insights, the reliability of your machine learning models and the effectiveness of your business strategy are directly tied to the accuracy of your data. Our US-based professional services team will work closely with you to extract and structure your web data to meet your exact requirements. Evaluate the structure of the raw web data that the web scraping tool extracts, to make sure the data is fit for your analytics use cases. The volume of data you need to scrape will determine the type of web scraping service you'll need.
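To make the point about selectors concrete, here is a minimal sketch of targeting specific elements with XPath. It assumes a small, well-formed sample page (the `PAGE` markup and the `product`, `name` and `price` class names are hypothetical) and uses only Python's standard `xml.etree.ElementTree`, which understands a limited XPath subset. The standard library has no CSS selector engine, so a CSS selector such as `div.product` would need a third-party parser.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed product listing used only for illustration.
PAGE = """
<html>
  <body>
    <div class="product"><span class="name">Widget</span><span class="price">9.99</span></div>
    <div class="product"><span class="name">Gadget</span><span class="price">24.50</span></div>
    <div class="ad"><span class="name">Sponsored</span></div>
  </body>
</html>
"""


def extract_products(markup: str) -> list:
    """Return name/price pairs for every <div class="product"> element."""
    root = ET.fromstring(markup)
    products = []
    # The attribute predicate [@class='product'] skips the unrelated "ad" block.
    for node in root.findall(".//div[@class='product']"):
        name = node.find("span[@class='name']").text
        price = float(node.find("span[@class='price']").text)
        products.append({"name": name, "price": price})
    return products


if __name__ == "__main__":
    for product in extract_products(PAGE):
        print(product)
```

Real pages are rarely well-formed XML, so in practice a tolerant HTML parser would probably replace `ET.fromstring`; the selector logic stays the same.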

The amount of data you need, based on the size of your project, is very important. Web scraping is a powerful tool for data extraction, but it comes with responsibilities. Building a scraper seems a simple task, as there are countless online courses and videos that teach people how to write a web scraping script in Python or JavaScript. Many customers prefer ScrapeHero because it does all the work for them without the need for any additional software, hardware, scraping tools, or skills on their part.

Datahut is an excellent web scraping service, as good as any you will find. Its experienced team of data experts delivers premium alternative data using a "best of breed" approach. This lets you stop worrying about access to data and focus on the business intelligence derived from it. If you want a service that reliably gets the data you need at affordable prices, look no further. It is very hard to choose a provider according to your requirements, budget, and other concerns.

Factors To Consider When Choosing A Web Scraping Tool

They offer a range of delivery formats: CSV, JSON, JSONLines, or XML. Many websites may not permit you to extract and scrape their data. There are many ways to solve this problem, but they are quite expensive. A data scraping service provider has to use the right combination of technologies to keep costs down. Whether you need customer information or data on internal market structure or sales trends, anything can be bought from a web scraping service company. So save your time and nerves, and let a team of experts handle all your data needs.
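The delivery formats mentioned above differ mainly in how the same records are serialized. The sketch below is illustrative only: the `RECORDS` sample and the function names are assumptions, not any provider's actual export API. It writes the same scraped records as CSV, JSON and JSON Lines using the Python standard library.

```python
import csv
import io
import json

# Hypothetical scraped records used only for illustration.
RECORDS = [
    {"sku": "A1", "title": "Widget", "price": 9.99},
    {"sku": "B2", "title": "Gadget", "price": 24.5},
]


def to_csv(records):
    """One header row, then one comma-separated row per record."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(records[0]), lineterminator="\n")
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


def to_json(records):
    """A single JSON array holding every record."""
    return json.dumps(records, indent=2)


def to_jsonlines(records):
    """One standalone JSON object per line, convenient for streaming."""
    return "\n".join(json.dumps(record) for record in records) + "\n"


if __name__ == "__main__":
    print(to_csv(RECORDS))
    print(to_jsonlines(RECORDS))
```

CSV suits flat, spreadsheet-like data, while JSON Lines is often easier for large deliveries because each line can be parsed on its own.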


How Big Is Big Data? An Inside Look At It

More importantly, the cloud allows companies to tap into powerful computing capacity and store their data in on-demand storage to make it more secure and easily accessible. Before we get to the size of big data, let's first define it. Big data, as defined by McKinsey & Company, refers to "datasets whose size is beyond the ability of typical database software tools to capture, store, manage, and analyze." The definition is fluid. It does not set minimum or maximum byte limits because it assumes that as time and technology advance, so too will the size and variety of datasets.

Since then, NoSQL databases have been widely adopted and are now used in enterprises across industries. IBM research says 2.5 quintillion bytes of data are created every day and that 90 percent of the world's data has been created in the last two years. The amount of data created by people and machines is growing exponentially. In 2021, a large share of retail and marketing businesses (27.5%) stated that cloud business intelligence was critical to their operations.

Many businesses relied on big data technologies and solutions to achieve their goals in 2021. That year, companies spent around $196 billion on IT data center solutions, with enterprise spending on IT data center systems up 9.7% from 2020. In this article, we will discuss big data on a fundamental level and define common concepts you may come across while researching the topic. We will also take a high-level look at some of the processes and technologies currently being used in this space. But it wasn't always an easy sell, as the biggest change management hurdles included getting business staff to use the tool for the first time. "Whenever I get a new team, first we have a conversation where I learn more about their needs and goals to make sure Domo is the right tool for them," Janowicz says. The secret sauce behind the software, provided by Domo, is the alerts it sends when data is updated or when certain thresholds are triggered that require action by the custodian of the data, says Janowicz. As with most visualization tools, Domo renders La-Z-Boy's data in an intuitive graphical dashboard that's easy to grasp.

Big Data Industry Statistics

Now that you know the latest statistics and how big data affects the industry, let's dive deeper. According to big data statistics, cyber scams rose 400% at the start of the pandemic. In 2015, the market had already reached a size of $12 billion. As of 2013, a whopping 64% of the global financial sector had already incorporated big data as part of its infrastructure. The market for big data analytics in banking is set to reach $62.10 billion by 2025. However, it's not entirely surprising, considering the tech giant dominates the market with a 91.9% share. In addition, configuration changes can be made dynamically without affecting query performance or data availability. HPCC Systems is a big data processing platform developed by LexisNexis before being open sourced in 2011. True to its full name, High-Performance Computing Cluster Systems, the technology is, at its core, a cluster of computers built from commodity hardware to process, manage and deliver big data. Hive runs on top of Hadoop and is used to process structured data; more specifically, it's used for data summarization and analysis, as well as for querying large amounts of data.

Breaking Data Observability Misconceptions

Big data analytics helps companies understand their competitors better by providing deeper insights into market trends, market conditions, and other parameters. With the emergence of big data technology, the ability to process and analyze big data cheaply and quickly is essential for enterprises worldwide. One of the emerging trends is the increasing adoption of big data analytics across enterprises.


Web Data Extraction For Building An Email Database For Affiliate Marketers

Only with a good knowledge of the market can you gain insights into which marketing decisions will move your business ahead. The process of web scraping is easy to understand, but building a scraper from scratch is certainly not easy for non-technical people. Fortunately, there are many free web data extraction tools available thanks to the growth of big data. Stay tuned, there are some great free scrapers I would like to recommend to you. One day, you happen to meet your friend Mr. N, and he tells you that he knows a few people who can tell a much bigger audience about how great your product is. Your friend N here is similar to an affiliate network, which provides a platform for merchants and promoters to get in touch with each other.


Use Paid Ads To Collect Email Addresses

With web scraping, you can have your computer complete all those tedious tasks for you automatically. This gives marketers more time to focus on other, more creative tasks. Web scraping is much cheaper than a manual process of data mining. B2B scraping tools provide the required services at a reasonable price.

Product Price Prediction To Capture User Attention Or Analyzing User Attributes To Modify Websites

The trust of end users is the most essential thing that businesses across the globe aim to achieve. As we discussed in the previous section, directing traffic to irrelevant products will lead to fewer conversions, but directing traffic to a fraudulent product is a much graver offense. It will result not only in fewer conversions but also in you losing all the trust of your web traffic.

Take advantage of the simplicity and effectiveness of email marketing with highly targeted email lists from us. Let's look at some industries that the future of big data and web crawling will affect. Most salespeople, however, are still searching for leads on the Internet in a traditional, manual way. This allows you to extract various pieces of information that are clearly structured. The answer depends on how you plan to use the data and whether you comply with the site's terms of use.

A URL scraper can extract data from any web page into a downloadable spreadsheet. Given the variety of data any URL can provide, you can use this feature to complete a myriad of tasks, including scraping overall market statistics, online comments, and more. Fortunately, there are many government websites and other sources of national data that can inform your view of your market.

2023 And Beyond: The Future Of Data Science Told By 79,306 People, The PyCharm Blog, 11/22/2023

What Is Data Scraping? An Overview Of Techniques And Tools Apify David Barton

However, one difference the recent progress in AI made was a different baseline for upcoming web scraping startups. New web scraping companies no longer have to start from ground zero and can build their solutions with AI in mind. Of course, after this year, you can also expect a lot of abuse of the term, so stay SEO-vigilant online. As the issue of data privacy becomes increasingly pressing, websites, on the other hand, will continue implementing stricter measures to protect against web scraping. These can include browser fingerprinting, IP blocking, placing data behind logins, and more robust security measures to prevent unauthorized access to their data. State institutions have also started publicly acknowledging the value of automated web data collection.

Likewise, you might have stumbled onto a portal that gathers the offerings and prices of many vendors into a single, convenient place. The future of data scraping is certainly bright, full of new opportunities for businesses and organizations. Only with a good understanding of the market can you gain insights into which marketing decisions will move your business ahead. Scraping bots will happily extract any contact information they can find on a website and provide you with all the contacts you need. Organizations are increasingly leveraging the powerful capabilities of AI and ML to automate data analytics, unlocking valuable insights that were previously unattainable.

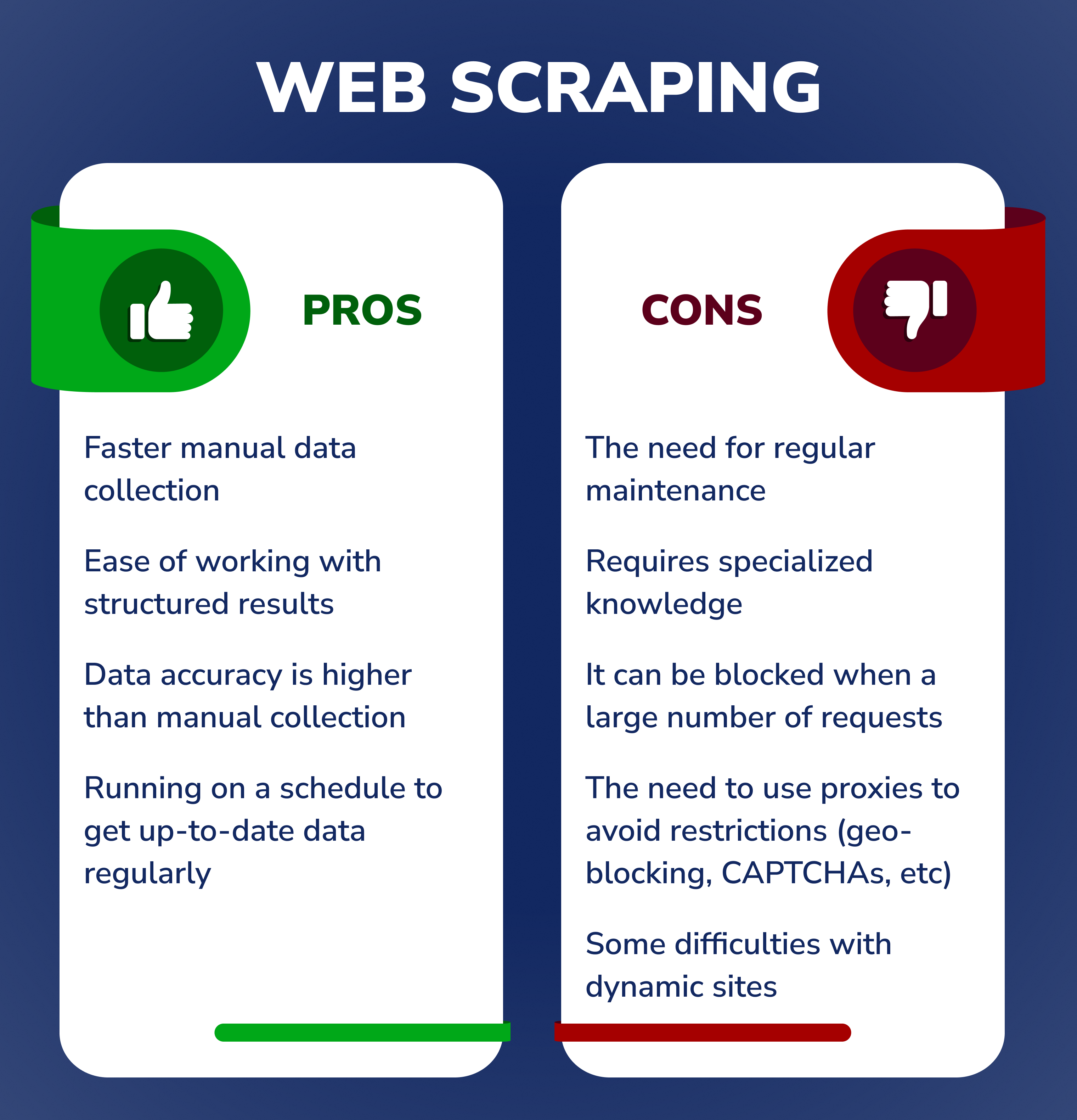

Data scraping is generally legal and benefits a great many organizations in numerous ways. Here we look at some of the use cases of data scraping, which also highlight its benefits. Price scraping, for example, can help businesses assess prices across competitors in the market and plan accordingly. The main goal of data scraping is to extract data from websites through automated processes, pulling information from diverse sources for various purposes. As our world becomes increasingly data-driven, data scraping efforts have gained traction in helping businesses make informed decisions, monitor trends, and stay ahead of the competition. There are numerous web scraping tools available, such as FlightStats, Wikibuy, the Web Scraper Chrome extension, the SEO Spider tool Screaming Frog, and Ahrefs Site Explorer. These tools help make web scraping more accessible, enabling users to gather valuable data from websites for different applications, such as market research, sentiment analysis, and competitor monitoring. The two main techniques used in data scraping are web scraping and screen scraping. Web scraping focuses on extracting data from websites, while screen scraping captures data from visual interfaces.

What Is Web Scraping? A Comprehensive Guide

Custom Data Extraction Services: Software development companies like Iterators can help your business with specific data extraction needs and automate the whole process as well. This option can save time and money, especially if you have a data scraping job that is advanced or strays off the beaten track. Scrape publicly available data and avoid using it for commercial gain. And make sure that your scrapers do not affect the site's performance. Data scraping is the automated process of extracting data from websites and converting it into a format that can be easily read and analyzed. By using a web scraper, large quantities of data can be gathered quickly and efficiently, allowing further analysis or storage for future use. Each method has its own set of tools and applications, making data scraping a versatile option for data gathering and analysis. In this article, we will discuss the top 10 scraping tools in 2023, their features, and how to choose the right one for your needs. We will also explore the future of web scraping and the trends that are shaping the industry. So, whether you are an experienced web scraper or just starting out, this article will give you valuable insights and help you make informed decisions when choosing the best scraping tool for your needs. In recent years, web scraping and alternative data have become increasingly popular among businesses and individuals alike. These data sources provide a wealth of information that can be used to gain insights, make informed decisions, and stay ahead of the competition. The vast amount of data that is constantly updated will undoubtedly influence data scraping. Web scraping follows a distinct way of extracting and harvesting data from the web, which comes in very handy for the many businesses and individuals that need regular, fresh data. Let's look at some industries that the future of big data and web crawling will affect. Despite these challenges, web scraping of social media and e-commerce sites will remain a popular trend in 2023. The benefits of scraping these sites outweigh the challenges, and businesses continue to find new and innovative ways to collect the data they need.

Why Do Businesses Need Data Scraping?

Web scraping has come a long way, with recent developments including listening to data feeds from servers and JSON becoming a common transport format between clients and servers. With the exploding popularity of AI tools and machine learning, it's safe to say that their sister field, data science, is here to stay. The realization of these benefits has driven the adoption of other AI applications such as machine learning and deep learning, the true future of data science. From transforming data to meet your specific needs to successfully navigating anti-scraping measures, the best data extraction experts have the skills and experience to ensure your success. The extracted data should be used to gain insight into market conditions, make better decisions, and develop better strategies. Generally, web scraping is considered legal as long as you are not breaching any copyright laws or data protection regulations. It is important to be aware of the laws in your jurisdiction so that you stay within the boundaries of the law.
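One common way to follow the advice above about not affecting a site's performance is to honor its robots.txt rules and pause between requests. The following is a minimal sketch under stated assumptions: the `ROBOTS_TXT` content, the `example-bot` agent name and the `fetch` callable are all hypothetical, and no network access happens here. It relies on `urllib.robotparser` from the Python standard library.

```python
import time
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real scraper would download the site's own file.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 2
"""


def build_parser(robots_text):
    """Parse robots.txt rules from text instead of fetching them."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return parser


def allowed_urls(parser, urls, agent="example-bot"):
    """Keep only the URLs the rules allow this user agent to fetch."""
    return [url for url in urls if parser.can_fetch(agent, url)]


def polite_delay(parser, agent="example-bot", default=1.0):
    """Use the site's Crawl-delay if it declares one, else a default pause."""
    delay = parser.crawl_delay(agent)
    return float(delay) if delay is not None else default


def fetch_politely(parser, urls, fetch, agent="example-bot", sleep=time.sleep):
    """Fetch allowed URLs one at a time, pausing after each request."""
    results = {}
    delay = polite_delay(parser, agent)
    for url in allowed_urls(parser, urls, agent):
        results[url] = fetch(url)  # `fetch` is supplied by the caller
        sleep(delay)
    return results
```

Passing `fetch` and `sleep` in as parameters keeps the sketch testable without touching a real site. Note that robots.txt compliance is a courtesy convention and does not by itself settle the legal questions raised above.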


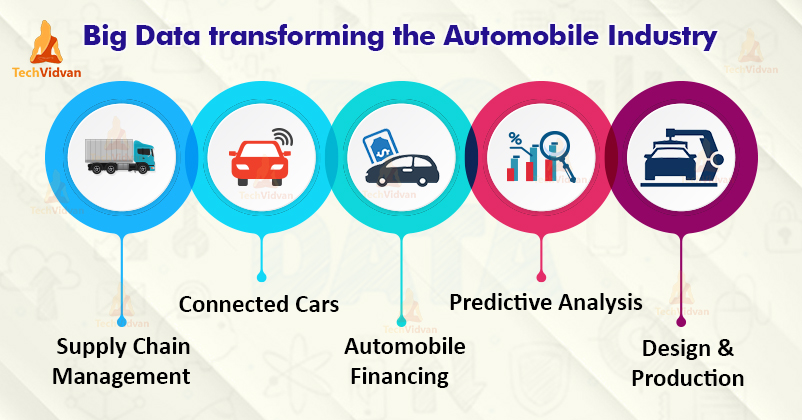

How Big Data Can Be Used In The Automotive Industry

With sensors fitted to the components of high-end cars, along with GPS location and navigation-related information, car manufacturers can anticipate the needs of their customers. For example, if a part has been failing and needs to be replaced, manufacturers are promptly notified by these sensors and location data, and a brand-new part is shipped and made available at the nearest dealer location. However, protecting customer information and using it responsibly must be the main goal of any automaker. One study noted that 61% of automotive marketers are very likely to use location data to analyze consumer behavior at car dealerships. In addition, the other proposed solutions can be moved to the small-scale testing stage in the real world. Blockchain has several challenges to address, and scalability is considered the greatest. Car sales grew significantly from 2005 to 2020, along with the technology inside the vehicles. Using blockchain for data collection in the automotive industry has numerous implications from a scalability standpoint. As a result, it is not yet a practical choice for data collection because of its poor scalability; the message-receiving function would need to be improved. One referenced study addressed scalability through separate ledgers, but the growth in the amount of data collected creates problems.


Question Form

By using a large number of proxies, the web scraper can distribute its requests evenly across the IP address pool without reaching the website's request limit. For web data collection in the automotive industry, PromptCloud is one of the leading picks. It is fair to assume that, with further technical advances in the field, data-backed observations will shape the strategy of the automotive industry for the foreseeable future. Hopefully you now have a good understanding of how the automotive industry is currently benefiting from web scraping. Four of these recommend parallel extraction, while two do not specify the exact procedure.
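The idea of spreading requests evenly over a proxy pool can be sketched as a simple round-robin assignment. This is an illustration under stated assumptions: the proxy addresses and URLs are hypothetical, the function names are made up for this example, and no requests are actually sent.

```python
from collections import Counter
from itertools import cycle

# Hypothetical proxy pool used only for illustration.
PROXIES = ["10.0.0.1:8080", "10.0.0.2:8080", "10.0.0.3:8080"]


def assign_proxies(urls, proxies):
    """Pair each URL with the next proxy in round-robin order."""
    pool = cycle(proxies)
    return [(url, next(pool)) for url in urls]


def max_load(assignments):
    """Largest number of requests routed through any single proxy."""
    return max(Counter(proxy for _, proxy in assignments).values())


if __name__ == "__main__":
    urls = [f"https://example.com/listing/{i}" for i in range(9)]
    plan = assign_proxies(urls, PROXIES)
    print(plan[:3])
    print("busiest proxy handles", max_load(plan), "requests")
```

With nine URLs and three proxies, each address carries three requests, which is the kind of even spread that helps stay under a per-IP request limit.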

Such an application can be useful to customers who are looking to buy a new car. Manufacturers draw on customer feedback and constantly improve their products based on the data. Insurers and police departments may use the data collected by sensors to reconstruct accidents or incidents in general. The discrepancy between the coding of the three authors was minimal and was resolved without difficulty.

In recent efforts, network technologies have been used to improve the scalability of blockchain. However, these technologies are still in the early stages of development, and the question of how to build lightweight and scalable blockchain networks remains a significant concern. In addition, other solutions use a lightweight blockchain approach oriented toward scalability; for example, one reference considered the Lightweight Consensus Algorithm for Scalable IoT Business Blockchain to reduce delay time. The Lightweight Scalable Blockchain from another reference manages the blockchain dynamically, so as not to overload it with accumulated data; that reference also serves as a basis for the previously mentioned articles on lightweight blockchain. Another challenge to consider is that, because vehicles are fast-moving objects, there is not always a good connection with the receiver.

Scraping VINs For Historical Data

Two documents recommend that reviewers develop a strategy for obtaining unpublished data. Two textbooks advise reviewers to plan in advance which data they will need to extract for their review. One textbook makes an optional recommendation, depending on the number of included studies. Regarding the development of data extraction forms, the most frequent recommendation in the analyzed textbooks is that reviewers should create a customized extraction form or adapt an existing one to fit the needs of their review (6/11).

Price Comparison Engines And Vehicle Data Collection

This capability will require ongoing user testing after initial delivery to identify improvement opportunities, along with continuous software updates, releases, and features, to ensure high customer engagement. If done correctly, the upgrades will allow players to create differentiating "signature moments," similar to those iPhone users now enjoy after OS upgrades and new app launches. Monetization should focus on what customers want and their willingness to pay. To bridge gaps in understanding end users' perceived value, companies should hold customer clinics to map out specific aspects of services and identify missing elements that address pain points.

25+ Impressive Big Data Statistics For 2023

Ingestion frameworks like Gobblin can help to aggregate and normalize the output of these tools at the end of the ingestion pipeline. Before we look at these four workflow categories in detail, we will take a moment to talk about clustered computing, an important strategy employed by most big data solutions. Setting up a computing cluster is often the foundation for technology used in each of the life cycle stages. Big data problems are often unique because of the wide range of both the sources being processed and their relative quality.

In April 2021, 38% Of Global Businesses Invested In Smart Analytics

Using machine learning, they then built algorithms to forecast the number of upcoming admissions for different days and times. Yet data without analysis is rarely worth much, and this is the other part of the big data process. This analysis is referred to as data mining, and it endeavors to find patterns and anomalies within these large datasets.

Most enterprise businesses, regardless of industry, use around 8 clouds on average. 62.5% of respondents said their organization had appointed a Chief Data Officer, a fivefold increase since 2012 (12%). Batch processing is most useful when dealing with large datasets that require quite a bit of computation. Multimodel databases have also been created with support for different NoSQL approaches, as well as SQL in some cases; MarkLogic Server and Microsoft's Azure Cosmos DB are examples. Other countries in the lead were Germany and the United Kingdom.

At the time, it soared from 41 to 64.2 zettabytes in one year. Poor data quality costs the US economy up to $3.1 trillion annually. Over the next 12 to 18 months, forecasts show that global investments in smart analytics are expected to see a slight increase. Storing data at this scale usually means leveraging a distributed file system for raw data storage. Solutions like Apache Hadoop's HDFS filesystem allow large quantities of data to be written across multiple nodes in the cluster. This ensures that the data can be accessed by compute resources, can be loaded into the cluster's RAM for in-memory operations, and can gracefully handle component failures. Other distributed filesystems can be used in place of HDFS, including Ceph and GlusterFS. The sheer scale of the information processed helps define big data systems. These datasets can be orders of magnitude larger than traditional datasets, which demands more thought at each stage of the processing and storage life cycle. Analytics guides many of the decisions made at Accenture, says Andrew Wilson, the consultancy's former CIO.

Ethical Web Data Collection Initiative Launches Certification Program

At the end of the day, I expect this will create more seamless and integrated experiences across the entire landscape. Apache Cassandra is an open-source database designed to handle distributed data across multiple data centers and hybrid cloud environments. Fault-tolerant and scalable, Apache Cassandra provides partitioning, replication and consistency tuning capabilities for large-scale structured or unstructured data sets. Able to process over a million tuples per second per node, Apache Storm's open-source computation system specializes in processing distributed, unstructured data in real time.


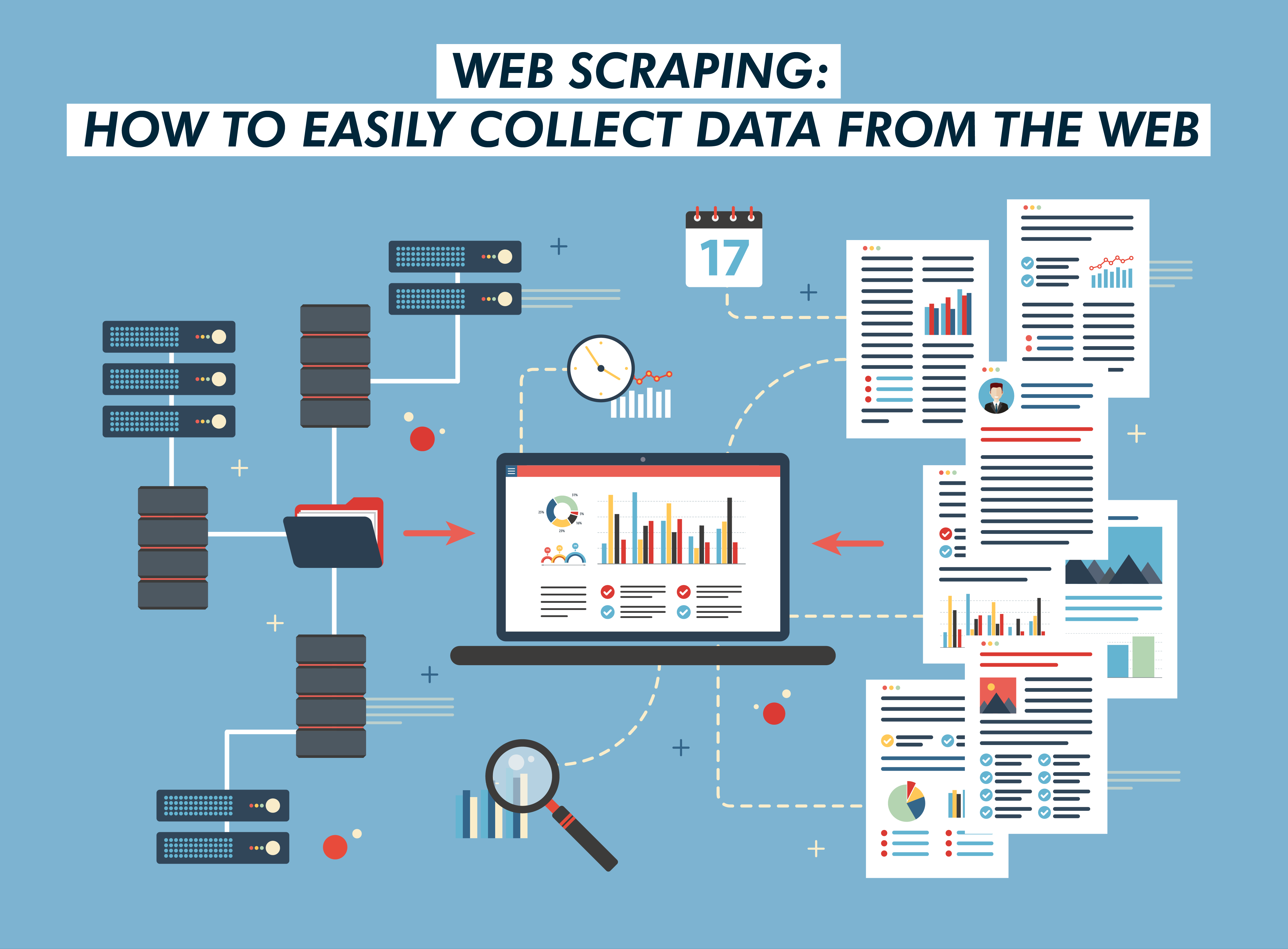

Data Crawling Vs Data Scraping: The Key Differences

None of the above necessarily has to come from the internet or from websites. Want to know the difference between web scraping and web crawling? As the internet and its use expand, the number of data-driven companies keeps growing. According to Forrester, the average growth of such companies is around 30% per year. It is estimated that by 2021, they will outperform their less-informed industry competitors by $1.8 trillion annually.

Featured Content

Data scraping requires a parser and a scraper agent, while data crawling requires only a crawler bot. Data scraping is done on small and large scales, while data crawling is usually done at large scale. Data scraping doesn't involve visiting every target web page to download data, while web crawling requires visiting each web page until the URL frontier is empty. One of the minor annoyances of data scraping is that it can produce duplicate data, because the method does not filter duplicates out of the multiple sources it extracts from. Data scraping tools have narrow functionality that can be tailored to any scale. Data scraping will pull current stock prices, hotel rates, real estate listings: really anything you can think of. Data crawling, meanwhile, is more complex and digs deeper into the material it investigates.
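The two ideas in the comparison above, crawling until the URL frontier is empty and removing duplicate records after scraping, can be sketched as follows. The `LINKS` graph stands in for real pages and is hypothetical, as are the function names and sample rows; a real crawler would fetch each page and parse its links instead of looking them up in a dictionary.

```python
from collections import deque

# Hypothetical site structure: each page maps to the links found on it.
LINKS = {
    "/": ["/a", "/b"],
    "/a": ["/b", "/c"],
    "/b": ["/"],
    "/c": [],
}


def crawl(start, links):
    """Visit pages breadth-first until the URL frontier is empty."""
    frontier = deque([start])
    seen = {start}
    visited = []
    while frontier:
        url = frontier.popleft()
        visited.append(url)
        for target in links.get(url, []):
            if target not in seen:  # never queue the same URL twice
                seen.add(target)
                frontier.append(target)
    return visited


def dedupe(records, key):
    """Drop scraped records whose `key` value was already seen, keeping order."""
    seen = set()
    unique = []
    for record in records:
        if record[key] not in seen:
            seen.add(record[key])
            unique.append(record)
    return unique


if __name__ == "__main__":
    print(crawl("/", LINKS))
    rows = [{"sku": "A1"}, {"sku": "B2"}, {"sku": "A1"}]
    print(dedupe(rows, "sku"))
```

The `seen` set plays the same role in both functions: it keeps the crawler from looping on pages that link back to each other, and it keeps the scraped output free of repeated records.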

Regardless of the software or program being used, PDF files keep their quality, which makes them ideal for printing purposes. You can generate a list of URLs through API calls and save them in a format that your web scraper can use to extract data from those specific pages. Companies that get used to scraping data systematically eventually gain more business leads, win a larger market share and increase their revenue. Being able to obtain accurate and relevant data efficiently is an indispensable part of getting ahead of the competition.

Data scraping is typically used to extract specific information for research or business purposes. Crawling, by contrast, involves using web crawlers or spiders to browse through different websites, collecting information along the way. Spiders are automated programs that crawl through websites to index new content. For companies that want to thrive on efficiency and excellent service, it's essential to implement proper data management. Also, keep in mind that there are different data extraction methods to choose from, ranging from simple to advanced. JPEG is one of the most common formats encountered in data scraping, with a long history and support from every web browser and image editor on the market.

Use Cases For Web Crawling

Crawling provides methods to identify the specific URLs that hold the required data set. Scraping and crawling can go hand in hand, but each process has specific use cases. However, the legality of these activities depends on the type of data being scraped or crawled. Choosing an appropriate data parsing tool is important in web scraping to ensure the accuracy of the collected and transformed data. A parser transforms raw data into a readable format, making it ready to use at any time. A crawler indexes web pages by following links and collecting URLs from them. Data scraping, on the other hand, is often a one-time or occasional process. Data crawling, also known as web crawling or spidering, is the process of automatically collecting data. Google Sheets is often a go-to solution for agile businesses that find the web and team collaboration important for their day-to-day operations.
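As an illustration of what a parsing step does, turning raw HTML into readable, structured records and collecting URLs from links, here is a small sketch using only Python's standard `html.parser` module. The `RAW_HTML` snippet and the `LinkParser` class are made up for this example; a real project might well prefer a dedicated parsing library.

```python
from html.parser import HTMLParser

# Made-up raw HTML standing in for a downloaded page.
RAW_HTML = """
<ul>
  <li class="item"><a href="/p/1">Blue Widget</a></li>
  <li class="item"><a href="/p/2">Red Widget</a></li>
</ul>
"""


class LinkParser(HTMLParser):
    """Collect a {url, title} record for every <a href=...> tag."""

    def __init__(self):
        super().__init__()
        self.records = []
        self._href = None   # href of the <a> tag currently open, if any
        self._text = []     # text fragments seen inside that tag

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.records.append(
                {"url": self._href, "title": "".join(self._text).strip()}
            )
            self._href = None


parser = LinkParser()
parser.feed(RAW_HTML)
records = parser.records
```

The resulting `records` list is the "readable format": each entry pairs a link with its visible text and can be passed on to storage or to a crawler's frontier.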

A Comprehensive Guide To Web Scraping Techniques In 2023

ScrapeBox is a desktop scraper, available for Windows and macOS, with a strong focus on SEO-related tasks; the vendor calls it the "Swiss Army Knife of SEO". It does, however, include a number of other features that extend beyond SEO (e.g. YouTube scraping, email harvesting, content posting, and more). Being a desktop tool means you need to provide the hardware, the connectivity, and the overall system maintenance.

Respect A Website's Message Data

Thus, users can share what they are struggling with, and they will always find someone to help them with it. The volume of data on the web is increasing daily, and it has become practically impossible to scrape this amount by hand. That is why web scraping tools have become increasingly popular and useful to everyone, from students to enterprises. Here are some of the most popular automated web scraping tools. Most web crawling uses one of several data formats, such as comma-separated values (CSV) and JavaScript Object Notation (JSON).
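The two output formats mentioned above, CSV and JSON, can both be produced with Python's standard library. The sketch below uses made-up sample rows; `to_csv` and `to_json` are hypothetical helper names.

```python
import csv
import io
import json

# Made-up scraped rows used only for illustration.
rows = [
    {"name": "Blue Widget", "price": "9.99"},
    {"name": "Red Widget", "price": "12.50"},
]


def to_csv(records):
    """Serialize records as comma-separated values with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=["name", "price"], lineterminator="\n"
    )
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


def to_json(records):
    """Serialize the same records as JavaScript Object Notation."""
    return json.dumps(records, indent=2)


csv_text = to_csv(rows)
json_text = to_json(rows)
```

CSV suits flat, tabular results that will be opened in a spreadsheet, while JSON handles nested fields more naturally.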

- Obtaining data from the web is especially important for today's enterprises.
- These libraries make it easy to write a script that extracts data from a website.
- To learn more about it, have a look at API Integration in Python.
- So, we need to pass the URL of the Analytics Vidhya machine learning blog section and the second required list.
- Next, click the Save Table action following the Scrape structured data action.

However, keep in mind that because the Internet is dynamic, the scrapers you build will probably require constant maintenance. You can set up continuous integration to run scraping tests periodically to ensure that your main script doesn't break without your knowledge. Unstable scripts are a realistic scenario, as many websites are in active development. Once the site's structure has changed, your scraper might not be able to navigate the sitemap correctly or find the relevant information. The good news is that many changes to websites are small and incremental, so you'll likely be able to update your scraper with only minimal adjustments. There's so much information on the web, and new information is constantly added.
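One way to catch a structural change early is a small check that a periodic test job can run. The sketch below is an assumption-laden illustration: the two HTML snippets and the class names `job-card` and `title` are invented, and `missing_selectors` is a hypothetical helper that only looks for CSS class names rather than full selectors.

```python
from html.parser import HTMLParser


class ClassCollector(HTMLParser):
    """Record every CSS class name that appears in the page."""

    def __init__(self):
        super().__init__()
        self.classes = set()

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name == "class" and value:
                self.classes.update(value.split())


def missing_selectors(html, required_classes):
    """Return the class names the scraper relies on that the page lacks."""
    collector = ClassCollector()
    collector.feed(html)
    return sorted(set(required_classes) - collector.classes)


# Invented pages: the site renamed "job-card" to "listing" in the new version.
OLD_PAGE = '<div class="job-card"><h2 class="title">Dev</h2></div>'
NEW_PAGE = '<div class="listing"><h2 class="title">Dev</h2></div>'
REQUIRED = ["job-card", "title"]

ok = missing_selectors(OLD_PAGE, REQUIRED)
broken = missing_selectors(NEW_PAGE, REQUIRED)
```

A scheduled test can fail whenever the returned list is non-empty, which points directly at the part of the scraper that needs updating.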

What Is Web Scraping?

In this case, you can use manual web scraping to fill in the missing or incorrect data elements. Using hybrid web scraping techniques can help verify the accuracy and completeness of the scraped data. Smartproxy's web scraping API allows businesses and individuals to extract data from web sources using API calls. Given that time is money and the web is evolving at an accelerated pace, a professional data collection project is only possible with the automation of repetitive processes. Still, it's important to remember that web scraping only covers the ethical capture of publicly accessible data from the web. It excludes the selling of personal data by both individuals and companies. Businesses that use data scraping as a business tool usually do so to support decision making. The techniques listed in this blog can be mixed and matched. Sometimes JavaScript on a website is obfuscated so heavily that it is easier to let the browser execute it than to use a script engine.

Hybrid Web Scraping Techniques

Using web scraping software will give you a competitive advantage. You therefore need mechanisms to help draw useful conclusions from the data. Automated web scraping tools come in different forms and with varying strengths. Accessing your data can be challenging in various situations. Automated data extraction can offer the most effective way to extract data from your own or your partner's website.
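The hybrid approach described in this section, where automated output is checked for completeness and the gaps are filled in by hand, might look roughly like this in Python. The required field names and the sample records are assumptions made up for the example, and `split_for_review` is a hypothetical helper.

```python
# Assumed set of fields every scraped record should contain.
REQUIRED_FIELDS = ("name", "price", "url")


def split_for_review(records):
    """Separate complete records from those needing manual follow-up."""
    complete, needs_review = [], []
    for record in records:
        # A field counts as missing when it is absent or empty.
        missing = [f for f in REQUIRED_FIELDS if not record.get(f)]
        if missing:
            needs_review.append({"record": record, "missing": missing})
        else:
            complete.append(record)
    return complete, needs_review


# Made-up scraper output: the second record lost its price.
scraped = [
    {"name": "Blue Widget", "price": "9.99", "url": "/p/1"},
    {"name": "Red Widget", "price": "", "url": "/p/2"},
]

complete, needs_review = split_for_review(scraped)
```

Records in `needs_review` are the ones a person would look up manually before merging them back with the automated results.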

Why Should Auto Dealers Invest In A Good Data Gathering Platform?

Some also offer their connectivity packages and ADAS systems by subscription and plan to roll out similar offerings for ADAS features. For example, a Chinese EV OEM offers mobile charging services to customers, and a US start-up provides a similar service for refueling. Another EV OEM plans to offer an office-mode feature to optimize conference calls and document sharing, as well as a connectivity package with TV and media services. Beyond generating revenue, these services provide players with recurring interactions with their customers that could improve brand loyalty.

Recommended Articles

By incorporating Big Data and analytics into their supply chain, automakers can compare the parts they need against other products on the market based on parameters such as cost, component quality, and durability. As a result, they can choose between the best components on the market and those that will boost the company's profits. While data is helping manufacturers build better cars, it is also helping them sell more. GM uses aggregated dealership data to reveal insights such as how far a person is willing to drive to buy a new car. Their models found that someone will drive two hours to find a better deal on a car, but is less willing to drive that far when the car needs servicing.

The Auto Industry And The Data-Driven Approach

When GM considers changes for the next model year, they might opt for additional safety features or a better filtration system. Decisions like these have helped GM save roughly $800 per vehicle through targeted cost cutting and preventive maintenance warnings.

- This approach will help them create new use cases while also allowing them to improve existing features and services.
- While OEMs, suppliers, and other players along the value chain increasingly recognize this imperative, they have not yet consistently developed new offers and services that customers find compelling.
- Market trends and pricing intelligence are mainly obtained through web scraping, e.g. monitoring customers' purchasing behavior, global sales data, or competitors' pricing tactics.
- More specifically, researchers need to classify attacks and run more tests and simulations of possible attacks (i.e., DDoS, MITM, collusion, and data exposure).

Marketers use this same data-enabled approach to understand how and when to reach the right audience when they are ready for a new car. They also use historical driving data to help increase sales of aftermarket service orders. The auto industry is surpassing its former heights of the 1960s by offering desirable cars in all shapes, sizes, and prices, all thanks to harnessing the power of customer data.

Web Scraping In 2023: What's Ahead? AI, Legal, Libraries?

It provides a headless browser environment, enabling the rendering of web pages, execution of JavaScript, and interaction with pages by simulating actions such as clicks. Although web scraping can be implemented in almost any modern programming language, Python and JavaScript are nowadays the go-to choices for most developers. In the physical world, a common way to do it is to send a mystery shopper who can look at the shelves and see how products are priced.

The Future Of Data Scraping

And how do you spot a trend before anyone else does, if not with the help of data? Web scraping will be the leading tool used in equity research in the future. The outlook is bright as Internet usage grows, along with the amount of data available across the web. Nowadays, it doesn't really matter which market you operate in, as almost everyone ends up using the web at some point.

Data Center Proxies

Automation on a plate: automation is one of the best things ever to happen to the IT world. Scraping bots will readily extract any contact details they can find while scraping a website and provide you with all the contacts you need. They will crawl through every directory, contact page, or social media account to feed you with leads. In this article, we'll explore all sides of the term data scraping as we present its essentials. Another area where data scraping proved useful was the transfer of data from system to system. It thus became an essential routine when moving data from legacy systems to their modern counterparts.

- This means that while hiQ did not violate criminal law, it breached a contract (created by accepting LinkedIn's Terms of Service).
- Even if your business has nothing to do with the web, you'll be able to find plenty of useful and helpful information online, which may help you stay competitive.
- Data-driven decisions powered by quality data can sustain business growth.
- Hence, the data and our findings do not represent the whole Data Science community.

Scrapy remains the most popular web scraping library for Python, and in general, in 2023. Let's see what web scraping looked like in 2022 from technical, legal, business, and trend perspectives, and try to predict what 2023 holds. Attackers now exploit the process to commit phishing and bypass security measures to steal data not meant for them. It has become a channel for suspicious web activity that undermines the growth efforts of many businesses. Data scraping bridged the gap between the old and new worlds of technology. After this, you should be able to find the data from the website in your spreadsheet.

|

